What Is Zero-Shot Reasoning in AI?

Mon Nov 17 2025

Introduction

As AI models grow in size and capability, one of the most intriguing skills they now exhibit is zero-shot reasoning: tackling tasks they were not explicitly trained on, without any examples in the prompt. From natural language classification and image recognition to complex decision-making, zero-shot reasoning is redefining how we think about general, adaptable AI. In this article we’ll explore what zero-shot reasoning is, how it works, why it matters, its strengths and limitations, and how to use it in your own projects.

What Is Zero-Shot Reasoning?

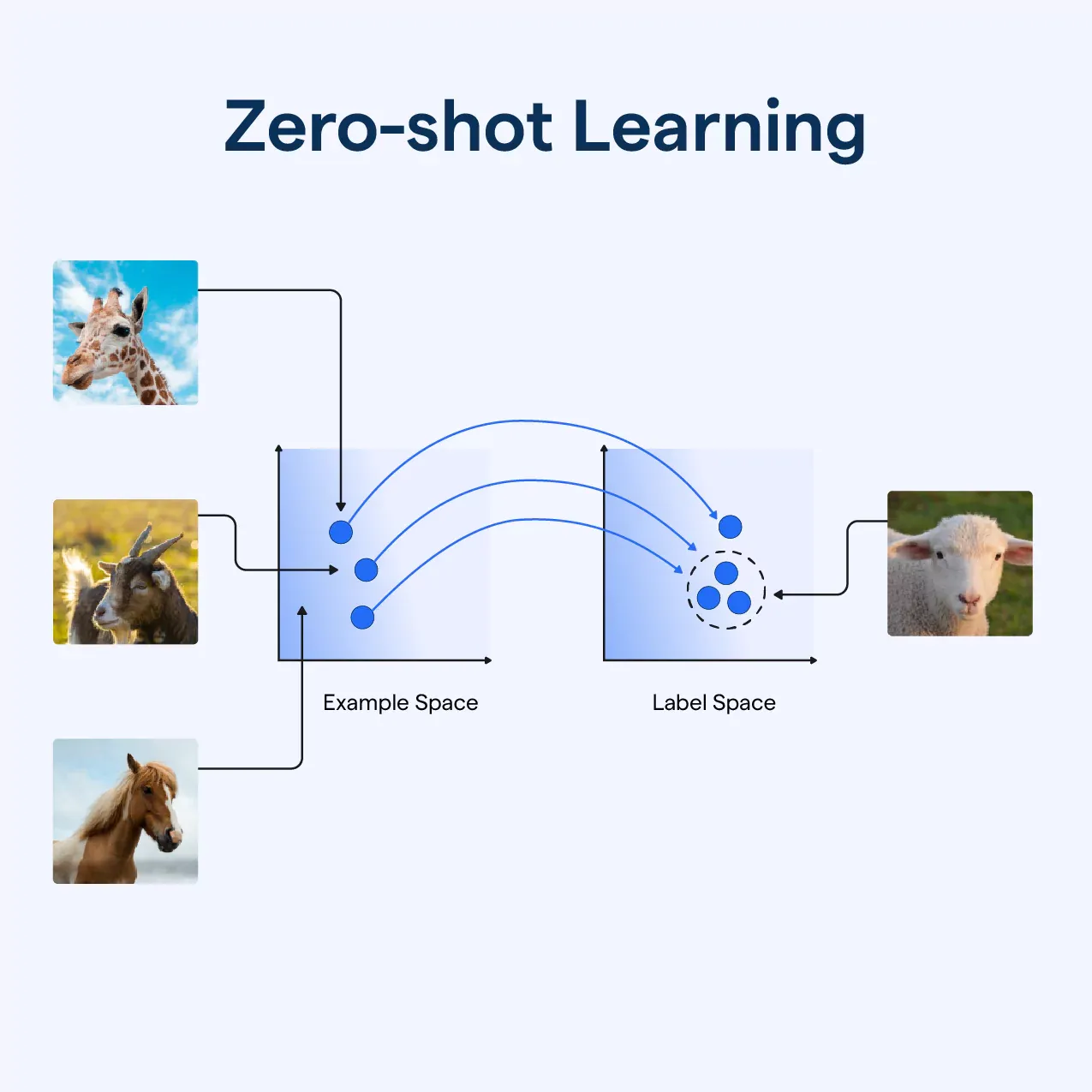

Zero-shot reasoning refers to the ability of an AI model to perform a new task without being explicitly trained on examples for that task. In simpler terms: you give it a prompt, and it must apply its general knowledge and reasoning capabilities to respond, with no fine-tuning and no labelled examples. This concept builds on zero-shot learning, where models classify unseen classes using auxiliary information such as textual descriptions or attributes of those classes.

In the context of large language models (LLMs), zero-shot reasoning usually means you instruct the model directly in natural language. For example:

“Classify this text as positive, neutral or negative: ‘I thought the movie was okay.’”

Here the model must infer the sentiment from the instruction alone: the prompt contains no labelled examples to imitate.
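A prompt like this can be assembled in code. The snippet below is a minimal sketch: `build_zero_shot_prompt` is a hypothetical helper name, not part of any library, and it only builds the prompt string. Sending it to a model is left to whichever client you use.

```python
def build_zero_shot_prompt(text: str, labels: list[str]) -> str:
    """Build a classification prompt with an instruction and no labelled examples."""
    if len(labels) < 2:
        raise ValueError("need at least two labels to choose between")
    # Join labels as "a, b or c" so the instruction reads naturally.
    label_list = ", ".join(labels[:-1]) + " or " + labels[-1]
    return f"Classify this text as {label_list}: '{text}'"


prompt = build_zero_shot_prompt(
    "I thought the movie was okay.",
    ["positive", "neutral", "negative"],
)
print(prompt)
```

The prompt carries only the task description and the input. That absence of worked examples is what makes it zero-shot.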

How Does It Work?

Zero-shot reasoning relies on several key mechanisms:

1. Pre-training knowledge

Large AI models (such as GPT and Claude) have been trained on massive, diverse datasets. They learn patterns, semantics, and inferential structures across language or vision. That broad learning enables them to generalise to new tasks.

2. Instruction tuning & alignment

Although zero-shot means no examples for the specific task, many models are instruction-tuned so they understand prompts that ask for a task. Instruction tuning has been shown to improve zero-shot performance substantially on tasks the model was not tuned on.

3. Prompt engineering

How you phrase the instruction matters. Clear task descriptions, explicit instructions, and relevant context give the model the best chance. For example, telling the model “Translate into French:” or “Summarise the following text:” removes ambiguity.

4. Generalisation of reasoning

The model uses its internal representations to map a new task to known concepts. That might be: “I have seen patterns of things being positive or negative; apply that here.” This subtle reasoning is what makes zero-shot possible.

Why It Matters in the AI Era

Zero-shot reasoning is significant for several reasons:

Scalability

Training a model for every specific task is costly and time-consuming. With zero-shot ability, you can deploy models for new tasks rapidly without collecting annotated data.

Flexibility

Models with zero-shot reasoning can adapt to new domains, languages or tasks that weren’t anticipated during training, making them far more general and useful.

Speed of innovation

For businesses and developers, zero-shot means less dependency on fine-tuning datasets, and more ability to iterate quickly. You can prototype tasks by just writing a prompt.

Reduced dataset bottlenecks

Many tasks lack large labelled datasets. Zero-shot opens up applications in under-represented languages, rare domains or niche tasks where data is scarce.

Better alignment with human-style reasoning

Humans often solve problems we’ve never explicitly seen before by using knowledge, inference and analogy. Zero-shot reasoning brings models a step closer to that kind of flexibility.

Use Cases of Zero-Shot Reasoning

Here are some practical applications:

- Text classification: Sentiment, intent, topic classification without labelled examples.

- Translation: Translating between language pairs for which the model has seen few or no parallel examples.

- Summarisation & explanation: Summarising a paragraph or explaining a concept the model hasn’t been fine-tuned for.

- Image recognition for unseen classes: In vision, models classify images of objects they never encountered, using descriptors of those classes.

- Conversational agents adapting to new tasks: A chatbot handling new workflow types without retraining.
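The image-recognition case above can be illustrated with a toy sketch. It assumes some upstream model has already turned an image into attribute scores. The attribute order, the class descriptors, and the scores below are all made-up illustrative values, and cosine similarity is just one reasonable way to match scores to descriptors.

```python
import math

# Hypothetical attribute order: [has_stripes, has_hooves, has_wings, lives_in_water]
CLASS_DESCRIPTORS = {
    "zebra": [1.0, 1.0, 0.0, 0.0],
    "eagle": [0.0, 0.0, 1.0, 0.0],
    "dolphin": [0.0, 0.0, 0.0, 1.0],
}


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def classify(attribute_scores: list[float], descriptors: dict[str, list[float]]) -> str:
    """Pick the class whose descriptor is most similar to the predicted attributes."""
    return max(descriptors, key=lambda name: cosine(attribute_scores, descriptors[name]))


# Illustrative attribute scores for an image of an animal the classifier never saw.
predicted = [0.9, 0.8, 0.1, 0.0]
print(classify(predicted, CLASS_DESCRIPTORS))
```

No zebra images are needed at training time in this setup. The class is recognised purely from its description, which is the "auxiliary information" that zero-shot learning relies on.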

Strengths & Limitations

Strengths

- No need for task-specific labelled data.

- Rapid deployment and iteration.

- Good for broad, flexible tasks that align with model capabilities.

Limitations

- Performance may lag compared to fine-tuned models on narrow tasks.

- Ambiguous or highly specialised tasks may confuse the model.

- Requires strong prompt design to steer the model correctly.

- The model’s pre-training may not cover some domains, languages or niche tasks.

- Zero-shot reasoning isn’t perfect “human-level” reasoning: it may still make errors or hallucinate.

How to Use Zero-Shot Reasoning Effectively

Here are practical tips:

Provide clear instructions

Use explicit phrasing like:

“Translate the following into German:”

“Categorize the following sentence as Fact or Opinion: …”

Define output format

If you expect a specific structure, ask for it.

“Provide the answer in a JSON object with keys answer and reason.”
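When you request a JSON reply with the keys answer and reason, it is worth validating the reply before using it, since a model may wrap the object in extra text or omit a key. The following is a minimal sketch: `parse_structured_reply` is a hypothetical helper, and `sample_reply` is an invented stand-in for real model output.

```python
import json

REQUIRED_KEYS = ("answer", "reason")


def parse_structured_reply(reply: str) -> dict:
    """Extract the JSON object from a reply and check that the expected keys exist."""
    # Tolerate text around the object by slicing from the first "{" to the last "}".
    start = reply.find("{")
    end = reply.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in reply")
    data = json.loads(reply[start : end + 1])
    missing = [key for key in REQUIRED_KEYS if key not in data]
    if missing:
        raise ValueError(f"reply is missing keys: {missing}")
    return data


# Invented example of what a model might return.
sample_reply = 'Sure. {"answer": "Opinion", "reason": "It expresses a personal judgement."}'
parsed = parse_structured_reply(sample_reply)
print(parsed["answer"])
```

Failing loudly on a malformed reply makes it easy to retry the prompt or tighten the format instruction.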

Leverage context when helpful

Even without examples, context or definitions can help:

“In the following sentence, identify the action and the actor.”

Test and iterate

Try multiple prompts and compare results. Sometimes minor tweaks (tone, phrasing) yield big gains.
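One way to compare prompts is to score each variant against a handful of hand-checked inputs. In the sketch below the small labelled set is used only for evaluation, never for training. `stand_in_model` is a hypothetical stub with hard-coded behaviour so the example runs on its own. In practice you would pass a function that calls your actual model.

```python
from typing import Callable


def accuracy(
    template: str,
    examples: list[tuple[str, str]],
    model: Callable[[str], str],
) -> float:
    """Fraction of examples where the model's reply matches the expected label."""
    correct = 0
    for text, expected in examples:
        reply = model(template.format(text=text))
        if reply.strip().lower() == expected:
            correct += 1
    return correct / len(examples)


def stand_in_model(prompt: str) -> str:
    """Hypothetical stub: only answers 'neutral' when the prompt offers that option."""
    lowered = prompt.lower()
    if "neutral" in lowered and "okay" in lowered:
        return "neutral"
    return "negative" if "boring" in lowered else "positive"


examples = [
    ("I loved it", "positive"),
    ("It was boring", "negative"),
    ("It was okay", "neutral"),
]
templates = {
    "two_labels": "Is this text positive or negative? {text}",
    "three_labels": "Classify this text as positive, neutral or negative: {text}",
}
scores = {name: accuracy(t, examples, stand_in_model) for name, t in templates.items()}
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

With this stub the three-label prompt scores higher, because the two-label prompt never gives the model a way to answer "neutral". Real models behave less predictably, so a larger evaluation set gives more trustworthy comparisons.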

Use zero-shot as a baseline

If performance is insufficient, you might move to one-shot or few-shot prompting, or fine-tuning. Zero-shot is often the starting point.
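Moving from zero-shot to few-shot prompting can be as simple as adding worked examples to the same prompt. Below is a minimal sketch, assuming a plain "Text / Label" layout. Both the `build_prompt` helper and that layout are illustrative choices, not a required format.

```python
def build_prompt(
    instruction: str,
    text: str,
    examples: tuple[tuple[str, str], ...] = (),
) -> str:
    """Return a zero-shot prompt when examples is empty, a few-shot prompt otherwise."""
    blocks = [instruction]
    for example_text, example_label in examples:
        blocks.append(f"Text: {example_text}\nLabel: {example_label}")
    # The final block leaves the label blank for the model to complete.
    blocks.append(f"Text: {text}\nLabel:")
    return "\n\n".join(blocks)


instruction = "Classify the text as positive, neutral or negative."
zero_shot = build_prompt(instruction, "I thought the movie was okay.")
few_shot = build_prompt(
    instruction,
    "I thought the movie was okay.",
    examples=(("Best film this year.", "positive"), ("A waste of time.", "negative")),
)
print(zero_shot)
print("---")
print(few_shot)
```

Keeping both variants behind one function makes it straightforward to start with the zero-shot baseline and add examples only if the results call for it.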

The Future of Zero-Shot Reasoning

In 2025 and beyond, expect to see:

- More powerful general models with stronger zero-shot capability as model size and training data expand.

- Hybrid systems combining zero-shot reasoning with retrieval-augmented generation (RAG) for factual accuracy.

- Chain-of-thought and step-by-step reasoning applied zero-shot to complex tasks.

- Multi-modal zero-shot reasoning (vision + language + audio), where models interpret several input types at once.

- Integration into agents: autonomous systems that reason about new domains and new tasks in a zero-shot manner.

- Better prompting techniques and tooling to make zero-shot easier for non-experts.

Apptastic Insight

Zero-shot reasoning represents a foundational shift in how we deploy AI. Instead of building narrowly fine-tuned models for each new problem, we increasingly rely on broad, flexible models that reason across tasks. For product builders, developers and researchers, mastering zero-shot prompting and reasoning is a key skill in 2025.

In short: when your AI can reason about something it’s never seen, you move from training for every task to instructing for every task. That’s how the future of AI works.